This is a great change, but I suspect a lot of people will still just check-check-next. Read on to see why this is bad for any version.

I've tried to keep this post to a minimum, but there's a lot to say. Please bear with me.

I know there has been a lot of griping about the new licensing model in SQL Server 2012. I understand the outcry; folks on high-end processors are going to end up paying much more for the same licenses, and some are even bypassing the upgrade because of it. My feeling is that we've had a relatively easy ride since hyper-threading was first stable enough to trust with SQL Server. I also feel that it is fair for us to be paying based on the power we're utilizing, regardless of how many cores are bundled together in each physical CPU. Essentially we're only now starting to pay for the computing power we've been taking advantage of for several years, and many of the other vendors have been much quicker about making their customers pay for this power.

That said, there is a lot of undue panic as well. I've heard many people relay presumptions that their licensing costs will now quadruple (or worse), because they thought that Microsoft was keeping the $27K Enterprise processor price tag and just applying it to each core instead. As I explain in my "What's New in SQL Server 2012" presentations, the new core licensing model doesn't hurt you if you've already been paying for Enterprise processor licenses on previous versions, unless you are deploying to high-end processors (more than 4 cores) or if you're continuing to use a processor with one or two cores (since you need to buy the licensing in pairs, and 4 cores is the minimum IIRC). The cost per core is almost exactly 25% of the previous per-processor license cost; meaning that if you have quad-core processors, you're paying about the same as you did before. And folks using certain AMD processors even get a bit of a break, if those CPUs are a fit for their environment, as Glenn Alan Berry outlines.
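To make that arithmetic concrete, here is a rough sketch in Python. It assumes the $27K per-processor figure quoted above, a per-core price of exactly 25% of that, a four-core minimum per physical processor (which, as noted, is from memory), and licenses sold in packs of two. The constant and function names are purely illustrative, and real price lists will differ.

```python
# Rough comparison of old per-processor vs. new per-core Enterprise pricing.
# All figures are illustrative assumptions taken from the paragraph above,
# not an official price list.

OLD_PRICE_PER_PROCESSOR = 27_000                   # approximate old price tag
PRICE_PER_CORE = OLD_PRICE_PER_PROCESSOR * 0.25    # "almost exactly 25%"
MIN_CORE_LICENSES_PER_PROCESSOR = 4                # assumed minimum per socket


def old_cost(sockets):
    """Cost under the old per-processor model."""
    return sockets * OLD_PRICE_PER_PROCESSOR


def core_licenses_needed(sockets, cores_per_socket):
    """Core licenses to buy: at least 4 per socket, rounded up to an even total."""
    total = sockets * max(cores_per_socket, MIN_CORE_LICENSES_PER_PROCESSOR)
    return total + (total % 2)  # licenses are assumed to be sold in pairs


def new_cost(sockets, cores_per_socket):
    """Cost under the new per-core model."""
    return core_licenses_needed(sockets, cores_per_socket) * PRICE_PER_CORE


if __name__ == "__main__":
    for cores in (2, 4, 8, 10):
        print(
            f"2 sockets x {cores} cores: "
            f"old ${old_cost(2):,.0f}, new ${new_cost(2, cores):,.0f}"
        )
```

Under these assumptions, dual- and quad-core boxes cost the same as before, and the price only climbs once a processor has more than four cores.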

The Real Problem

The real problem with this release, in many eyes, is that Microsoft took away CAL licensing for Enterprise Edition. CAL obviously made it much cheaper, if your situation warranted it, since you paid by the user/device rather than by the number of cores. So anybody who has been using CAL licensing up to this point has a choice to make with SQL Server 2012: pony up the much higher licensing costs, or move down to Standard Edition (CAL or core-based) or Business Intelligence Edition (CAL only). The latter choices mean they give up all of those Enterprise features they've already been using – never mind the new ones that they probably want, like Availability Groups.

The Exception to the Rule

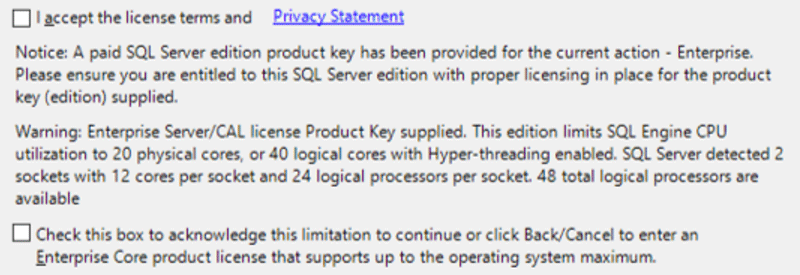

Now, there is an exception: if you have current Software Assurance (SA) for SQL Server 2008 R2, this allows you to slide into SQL Server 2012 while maintaining CAL licensing (which you can't do if you are buying new SQL Server 2012 licenses – those have to be core-based, always). Theoretically, upgrading with CAL licensing in place allows you to enjoy all the Enterprise Edition benefits of SQL Server 2012, while pushing off the much higher core-based licensing costs until the next version that you upgrade to. But there's an important catch in the way this exception works that many customers seem to overlook. From http://www.microsoft.com/sqlserver/en/us/get-sql-server/licensing.aspx (hidden by default under the "Licensing by users – Server + CAL licensing" section):

Existing Enterprise Edition licenses in the Server + CAL licensing model that are upgraded to SQL Server 2012 and beyond will be limited to server deployments with 20 cores or less. This 20 core limit only applies to SQL Server 2012 Enterprise Edition Server licenses in the Server + CAL model and will still require the appropriate number/versions of SQL Server CALs for access.

(Emphasis mine.) I know of at least one customer who rushed out to buy Software Assurance for SQL Server 2008 R2 CAL licensing for a handful of servers, with the assumption that he would be able to upgrade later to 2012 and keep the CAL licensing. He missed the footnote above and now realizes that his 48-core servers are actually not eligible for CAL licensing. SQL Server will install, of course, but it will only see 20 cores.

But it Gets Worse

Multiple customers *did* upgrade their servers through Software Assurance, and all have been surprised by the 20-core limit. One customer's server, in particular, showed serious performance degradation after the upgrade, and because they didn't know about the limit, they didn't realize it was the cause. The limit is not just a line item in your licensing agreement; SQL Server actually puts a hard limit on how many logical processors it will identify and use. In this case, their 2008 R2 instance had been using all 40 cores, but after the upgrade it was only using 20. This was not the upgrade experience they expected.
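As a quick sanity check before upgrading, the effect of the cap can be sketched in a few lines of Python. This is only an illustration: the 20-core figure comes from the licensing text quoted above, while the function names and the sample core counts (other than the 40- and 48-core servers mentioned in this post) are made up.

```python
# Sketch: how much of a server an upgraded Server + CAL instance can use.
# The 20-core cap is taken from the licensing text quoted above; everything
# else here is a hypothetical illustration.

CAL_UPGRADE_CORE_LIMIT = 20


def usable_cores(physical_cores, limit=CAL_UPGRADE_CORE_LIMIT):
    """Cores the instance will actually use under the cap."""
    return min(physical_cores, limit)


def idle_fraction(physical_cores, limit=CAL_UPGRADE_CORE_LIMIT):
    """Fraction of the server's cores left unused because of the cap."""
    return 1 - usable_cores(physical_cores, limit) / physical_cores


if __name__ == "__main__":
    for cores in (16, 40, 48):
        print(
            f"{cores}-core server: uses {usable_cores(cores)} cores, "
            f"{idle_fraction(cores):.0%} idle"
        )
```

Any server that reports a non-zero idle fraction here is one where the CAL upgrade path deserves a conversation with the licensing rep first.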

So, why didn't they know about the 20-core limit?

- Their licensing rep didn't tell them. It's surprising to me that a licensing rep for a company would sell them upgrade licensing under a very specific clause, without knowing anything about their environment (or asking), or at the very least warning them about the limit. This seems to be a pretty important facet of the licensing exception.

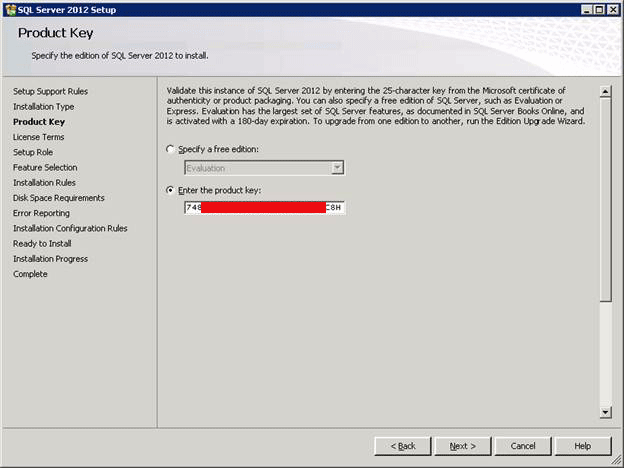

- Setup doesn't prompt them for anything. With Volume Licensing, you get pre-pidded installation binaries. You don't choose the licensing model or the number of processors/cores like you did in the old days. Here is an example of the edition choice screen for a VL version of setup at left, compared to the old SQL Server 2000 dialog on the right:

At no point does the visible portion of setup mention or offer in any way that the server will be limited to 20 cores or that this product key does, in fact, represent CAL licensing. The person installing the software may have no idea what the licensing should be, and may be putting it on servers where it wasn't intended. I think Microsoft can do better in preventing these things from happening by putting some very obvious indication about what type of licensing is about to be installed.

- The footnote on the web site is not highly visible. I think that the exception for CAL-based licensing should be much more visible. As if licensing isn't a complex enough topic already, now customers have to deal with licensing restrictions that aren't well-publicized and that aren't disclosed when they're actually purchasing the licenses.

- The EULA does have some information, but it's way down, not trivial to decipher, and you have to actively scroll to find it. Here is what it says ("OSE" means "operating system environment"):

MICROSOFT SOFTWARE LICENSE TERMS

MICROSOFT SQL SERVER 2012 ENTERPRISE SERVER/CAL EDITION

…about 70 lines of typical EULA mumbo-jumbo, then item 2.2 states (in part):

Running Instances of the Server Software. Once you have assigned the license to the server, you may run any number of instances of the server software in up to four OSEs (physical and/or virtual) on the licensed server at a time, provided that:

(a) if you are running the software in a physical OSE, the OSE may access up to 20 physical cores at any time

Which means that, even if you install multiple instances on the same OS, you won't be able to split your actual cores between instances – they can all see only the same 20 cores (and the rest will sit idle). It seems you can get around this somewhat by having four virtual machines, provided you can set affinity correctly, but any single VM still cannot make use of more than 20 cores.

I'm of course not going to suggest that it's a valid excuse that they didn't read the EULA. But should this really be the only place where they get a warning, whether they're working with the product itself or with the licensing reps who are supposed to ensure compliance?

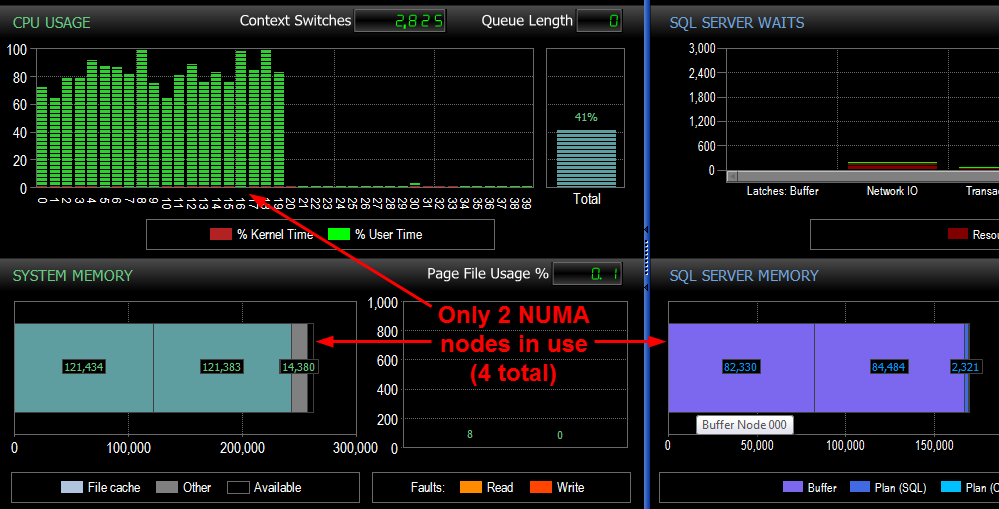

There were two clear indicators for this customer that they weren't utilizing all 40 cores. But they had to dig deeper than they should have to find this out.

- SQL Sentry breaks out Windows and SQL Server memory by NUMA node on its dashboard. The customer upgraded to v7 and immediately noticed that only two of the four nodes were showing up; SQL Server had automatically taken the other two nodes offline:

The server was also suffering strange performance issues, which led to…

- …checking the SQL Server error log. In this case it actually tells you that the number of logical cores it is going to identify and use has been limited to 20:

04/24/2012 18:04:23,Server,Unknown,Detected 262133 MB of RAM. This is an informational message; no user action is required.

04/24/2012 18:04:23,Server,Unknown,SQL Server detected 4 sockets with 10 cores per socket and 10 logical processors per socket 40 total logical processors; using 20 logical processors based on SQL Server licensing. This is an informational message; no user action is required.
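Since that message is easy to miss, one option is to scan an exported error log for it. The following is a minimal Python sketch; the regular expression is built only from the single message quoted above, so other builds or versions may word it differently, and `find_license_limit` is a hypothetical helper name.

```python
import re

# Pattern derived solely from the error-log message quoted above; the exact
# wording in other SQL Server builds is not guaranteed to match.
LICENSE_LIMIT_PATTERN = re.compile(
    r"(?P<total>\d+) total logical processors; "
    r"using (?P<used>\d+) logical processors based on SQL Server licensing"
)

SAMPLE_LOG = [
    "04/24/2012 18:04:23,Server,Unknown,Detected 262133 MB of RAM. "
    "This is an informational message; no user action is required.",
    "04/24/2012 18:04:23,Server,Unknown,SQL Server detected 4 sockets with "
    "10 cores per socket and 10 logical processors per socket 40 total "
    "logical processors; using 20 logical processors based on SQL Server "
    "licensing. This is an informational message; no user action is required.",
]


def find_license_limit(log_lines):
    """Return (total, used) from the first matching line, or None if absent."""
    for line in log_lines:
        match = LICENSE_LIMIT_PATTERN.search(line)
        if match:
            return int(match.group("total")), int(match.group("used"))
    return None


if __name__ == "__main__":
    result = find_license_limit(SAMPLE_LOG)
    if result is not None and result[1] < result[0]:
        total, used = result
        print(f"Licensing cap in effect: using {used} of {total} logical processors")
```

Running something like this against a freshly upgraded instance's log would surface the cap on day one rather than after a performance investigation.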

I wonder how long, without the odd symptoms in Performance Advisor, the customer would have spent assuming that SQL Server 2012 was simply not ready for production? How long before they would have spotted that message in the error log? How long would it take to get this information out of their licensing rep, if they hadn't already been armed with it due to their own digging?

The issue I see with all of this is that most customers won't see this issue until they move to production. Why?

- Many customers have budgets that are spread thin enough (especially with the increased costs of core-based licensing) that they can't always afford a test or QA machine with the same number of processors as their production machines. So when they run their tests against a 16-core box, they expect the production box to perform better, and to not have flaky symptoms due to SQL Server thinking that some NUMA nodes are only partially online or completely offline. And there is no way for them to come across this 20-core limitation until they deploy to production. This is not an excuse for having insufficient hardware in test and QA environments, but the reality is that most customers can't do it.

- Even for those customers that *do* have big enough hardware in their QA/test environments, I haven't verified, but I can only assume that the MSDN versions of the software (which most customers use to test their deployments in non-production environments) do not have these built-in limits of 20 cores. If they do, that's a real problem for all of the other customers. If you test on the MSDN version (which also doesn't let you specify whether you're using CAL- or core-based licensing), you're not testing the exact performance or functionality you'll get in real production systems, and again you'll have a surprise waiting for you when you go live.

What Am I Expecting Here?

Now I don't think it's reasonable to ask for any of the actual licensing policies to change, and I'm sure they anticipated certain objections when they decided on this path in the first place. So I don't think that would do us any good. What I would like to see, however, is better disclosure. Those licensing reps and account managers should never let a customer buy software assurance or upgrade directly to SQL Server 2012 under CAL without verifying that they will be eligible based on the number of cores in their server(s). Assuming that they have read some footnote on a web page and are completely aware of the issue is the opposite of customer service.

More importantly, I hope to prevent other customers from rushing into Software Assurance contracts for SQL Server 2008 R2 with the expectation that they can upgrade to SQL Server 2012 and keep their CAL-based licensing regardless of the size of their servers. When you get into conversations with your account manager, *please* be sure that you discuss this issue, and get their promises in writing.

As some of you already know, I've provided some feedback to Microsoft about this, and gave them a 24-hour grace period to respond. In fact I gave them more than 36 hours before hitting publish, but I have yet to receive a response. I'll update this space when I hear anything.

So given the excessive increase in costs, do we need to upgrade to 2012? We're planning on replacing our database server next year, can't I just install 2008 R2 and use our existing license on the new server?

I'm late for every party 🙂 With the dawn of 12 core, 24 Lcpu per socket servers we wanted to get geared up for performance testing MSSQL 2012 on 48 Lcpu. Our MSDN license was ENT + CAL, and it took a little while to determine this was why the server would only get 82% CPU busy. Once we looked at per-cpu stats it was ALMOST obvious 🙂

Yesterday's legal battle is today's victory. If you had SA on your Server + CAL model before April 1st, 2012 then you can ask Microsoft for Amendment ID M145. Here is the part of the Amendment that you want:

"Notwithstanding anything to the contrary in the Product Use Rights, if SQL Server 2012 Enterprise is run in a physical Operating System Environment (OSE) on the licensed server, that physical OSE may access any number of physical cores."

Everyone here is missing the point. Go read your Volume License Agreement with Microsoft – if you have SA then Microsoft is legally required to upgrade you to the Core version of SQL 2012 Enterprise and allow you to use more than 20 cores. At least that is the stance my legal team is taking, and today we have sent a demand letter to Microsoft. Microsoft has opened themselves up to a class action lawsuit on this matter and we intend to preserve our rights under our Volume License Agreement.

Here are the sections that your legal team needs:

Section 5b states:

“No detrimental changes for Enterprise Products. If a new version of an Enterprise Product has more restrictive use rights than the version that is current at the start of the applicable initial or renewal term of the agreement, those more restrictive use rights will not apply to the Customer’s use of that Product during that term.”

Section 16c states:

“Order of precedence. In the case of a conflict between any documents identified in the first page that is not resolved expressly in the documents, their terms will control in the following order: (1) these terms and conditions and the accompanying signature form; (2) the Product List; (3) the Product use rights; and (4) all orders submitted under this agreement.” #1 refers to your Volume License Agreement.

B, thanks for the update, though I'm not quite certain that multiple SQL Server instances will know about each other, and one will know the other is also constrained to 20 cores, and use 20 *different* cores as a result. I'm not even sure how feasible that is to pull off technically unless you manually set affinity (but again, I'm not sure that you can set affinity at all if SQL Server doesn't see beyond core 20 on startup). It's also hard to imagine how this would work on, say, a 24- or 36-core box.

Not sure if this changed since the original article or if it is even correct, but this is from Microsoft's Compute Capacity Limits article.

These limits apply to a single instance of SQL Server. They represent the maximum compute capacity that a single instance will use. They do not constrain the server upon which the instance may be deployed. In fact deploying multiple instances of SQL Server on the same physical server is an efficient way to use the compute capacity of a physical server with more sockets and/or cores than the capacity limits below.

http://msdn.microsoft.com/en-us/library/ms143760.aspx

God bless this June 30 transition date.

Kid you not, to upgrade my machine to 2012 using Enterprise on 2 processors @ 4 cores each is $71,000 on SELECT using the Core model. The Server + CAL model for 50 users will only cost me $14,000. This is a money grab by Microsoft, pure and simple.

The true evil in the new core based model is the requirement that if you have X hardware capability then you must buy Y core licenses. Pure EVIL.

For example a machine that's 2 processor 4 cores each. Even if your load only requires the use of 2 cores, you can't just buy two core licenses for that machine… oh no, you must buy 8… It's ridiculous. More than happy to be getting our 2012 before the cutoff and riding the next wave to the 2016 without getting stabbed in the back.

If the core licensing was sold as how the processor model was sold, there wouldn't be an issue; but this is a clear money grab on Microsoft's part, and it's going to backfire.

Aaron,

What about this statement found in the very same page two bullets before?

"To help with the transition to the new licensing model, the SQL Server 2012 Enterprise Edition will be available under the Server + CAL model through June 30, 2012."

Are Enterprise CALs really available until June 30? If so, I've been misleading all my clients just because Microsoft isn't crystal clear about their new license model.

I even watched webcasts (yes, more than one) about the new model and none talked about CALs being available to Enterprise.

Regards.

My frustration is not coming from the 'per core' part so much as from the *MEMORY* limits they put on Standard Edition.

I use 16GB *at home*; in no time 64GB will seem like a JOKE. Would I have to move to Enterprise at 10 TIMES the cost only because I want more memory?

In regard to Denny's comment:

" If you move to one of the free database platforms you'll be looking at a pretty heavy drop in available features when you move."

I could not disagree more:

Postgres is FREE, very stable currently, and open source. It can handle 64 PROCs with high scalability: http://rhaas.blogspot.com/2012/04/did-i-say-32-cores-how-about-64.html

and in terms of features 9.1 ROCKS:

http://www.postgresql.org/about/featurematrix/

I have worked with MS SQL Server for _many_ years but this 10x increase in prices with ridiculous limits in Standard Edition is making us look elsewhere.

Postgres is not the same it used to be. It is *much better* now !!

Good luck MS

Daniel, I disagree. What I expect (and what the specific customers I'm talking about expect) is that when they talk to their official license / account rep, they'd get some kind of disclosure about this. People with SA who are running 2008 R2 + CAL and then upgrade through SA do not expect to be surprised by the fine print.

Note that I did not say Microsoft did not communicate this. It's clearly in the docs, but I think they could have done better there and their reps *absolutely* can do better.

I don't agree with the statement that the license change limiting the Enterprise CAL model to 20 cores was not communicated well by Microsoft.

A few weeks ago Microsoft published 2 papers about licensing SQL Server 2012 that clearly stated the 20-core limitation in the Enterprise/CAL model, and the link to those papers was displayed prominently on the page about SQL 2012 editions.

The real problem is that almost nobody reads the license agreement or the licensing whitepaper, and therefore people often violate the license.

Personally I don't think the Standard or BI edition of SQL 2012 is a viable alternative to the Enterprise edition, as the Enterprise features are missing. The BI edition in particular is likely unusable, as BI often comes together with lots of data/large databases, and the BI edition does not support data compression or columnstore indexes. I guess the BI edition will disappear in the future, or some Enterprise features (data compression, columnstore indexes) will be added.

I personally think it was stupid for MS to take away one of the factors for competing against other vendors – cost. These cost changes as well as feature changes in 2012 are going to hurt them in competing against the likes of Oracle and DB2 while at the same time reducing the defenses against MySQL and other lower cost RDBMS.

Hi Aaron,

Great post, and I agree that more transparency about licensing will help here.

:{>

Thanks Aaron, a very important piece of information, I can see many companies tripping up over this.

M,

Yes the standard edition (and the BI edition) do the exact same thing with regard to the number of cores that can be used.

Frustrated,

Assuming that you move to Oracle, DB2, etc. you can expect the same per core license policy. If you move to one of the free database platforms you'll be looking at a pretty heavy drop in available features when you move.

All,

Look for a licensing blog post from me soon that talks about some of the joys of AlwaysOn Availability Groups and licensing.

Denny

M,

YES! That was precisely my point (it just took a lot of background to get there). I'm hoping to raise visibility to this so that customers (or consultants acting on behalf of customers) are aware of the issue even if their reps are not being, let's say, honest and forthright.

I am not sure about the enforcement of core count in Standard, as I don't have access to a retail or VL Standard SKU (only MSDN, which I don't think plays by the same rules at all), but I'd suspect that if they can put that enforcement into the Enterprise CAL Edition, they can also put it into the Standard Editions (it would be tied, similarly, to the license key). So I can't answer with any level of confidence, but I really think those customers might be in for the same rude awakening…

The main problem here is that the licencing reps don't seem to understand or care to communicate the difference in the licencing model.

Does anyone know if the core limits on standard edition are enforced in the same manner? I am referring to the 16 core limitation referenced here: (http://msdn.microsoft.com/en-us/library/cc645993(v=SQL.110).aspx). MSFT support has given some inconsistent feedback on this topic, and I haven't yet installed 2012 standard edition on a server of this size. Under SQL 2008R2, Standard was licensed for up to 4 processor sockets, which, given the core densities on today's servers, means many customers of standard edition will be running 20-40 cores on their 2 and 4 way boxes.

Frustrated,

I'm afraid that ship has sailed; the license cost decisions were made a long time ago and they won't be changing the standard policy if some customers threaten to switch platforms (where you'll surely run into the same type of shift). If you were already paying less for earlier version licensing on that same hardware, then my argument up top still holds to some degree: you got a discount of more than 50% then simply because Microsoft didn't apply per-core licensing yet (but it could have happened in the 2008 or 2008 R2 time frames).

Though, I do agree that graduation could have been implemented here, particularly for the SA/grandfather case. Let the customer with big iron still skate by with CAL licensing covering all of their cores (after all, that's why they bought SA in the first place) with the *advance* warning that the limit will be enforced next round.

Our SPLA costs will jump from $7,300 to $18,400. I agree that we should pay for our power, and we would understand an increase. But a jump to roughly 250% of what we pay now? No way. We are investigating other database options to replace SQL Server in our enterprise. And really, who's still on quad core for major enterprise systems? We have 10 8-core processors. On 5 production machines. There has to be something Microsoft can do to gradually increase the costs for us.
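For anyone checking similar before-and-after quotes, the percentage math is easy to get wrong, so here is a small Python sketch using the two SPLA figures quoted above. The helper name is illustrative; the two figures are the only inputs taken from the comment.

```python
# Sketch: relative change between two licensing quotes.
# The two dollar amounts are the SPLA figures quoted in the comment above.

def percent_increase(old, new):
    """Percentage by which `new` exceeds `old`."""
    return (new - old) / old * 100


if __name__ == "__main__":
    old_quote, new_quote = 7_300, 18_400
    print(f"New cost is {new_quote / old_quote:.2f}x the old cost")
    print(f"That is an increase of {percent_increase(old_quote, new_quote):.0f}%")
```

With these figures the new quote is about 2.5 times the old one, which is an increase of roughly 152%.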